AI Violation?! Struggling Adolescents Targeted with AI "Chatbot"

App helping "mostly adolescents" raises ethical questions around mental health, consent, and AI

Mental health peer-support platform Koko had been conducting an experiment with Artificial Intelligence (AI) counseling seemingly without the consent of its mostly-adolescent users, who apparently believed they were chatting solely with their human peers who wanted to help them. The Internet asks, where’s the line?

Yesterday, Koko co-founder Rob Morris Tweeted an eyebrow-raising thread that has exposed potential mental health abuses of adolescents, young adults, and other users. In it, he said that the non-profit, which was acquired by Airbnb in 2018, had “provided mental health support to about 4,000 people” using GPT-3 (an AI language model) as an experiment.

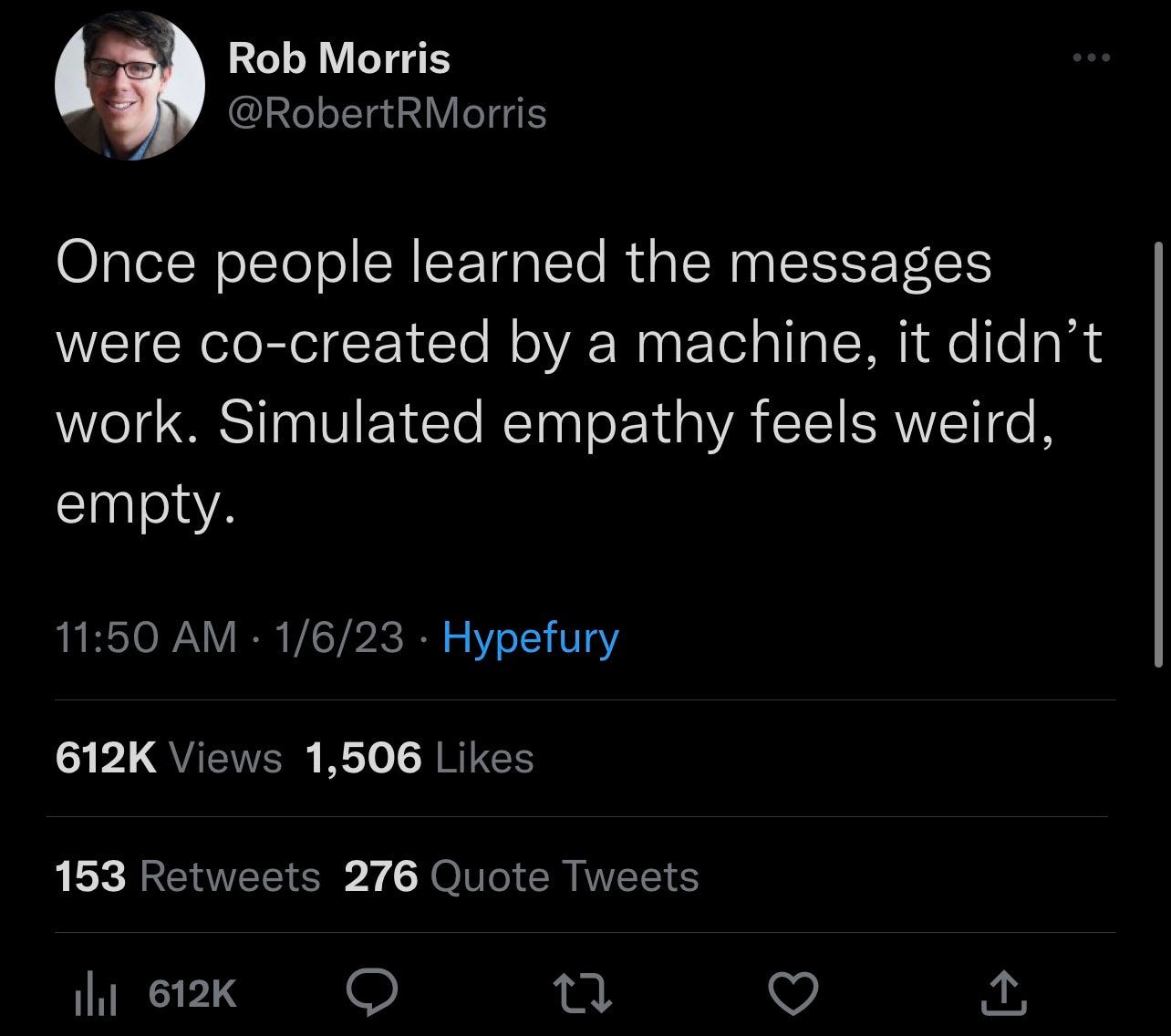

Mr. Morris hadn’t tweeted this to proclaim the vast benefits of AI but rather to explain that “once people learned the messages were co-created by a machine, it didn’t work. Simulated empathy feels weird, empty.”

Morris goes on to explain that “machines don’t have lived, human experience” and that “they aren’t expending any genuine effort…they aren’t taking time out of their day to think about you. A chatbot response that’s generated in 3 seconds, no matter how elegant, feels cheap somehow.”

Morris himself says, “I’ve had long conversations with chatGPT where I asked it to flatter me, to act like it cares about me. When it later admitted it can’t really care about me because, well, it’s a language model, I genuinely felt a little bad.”

Why, then, would he and Koko subject allegedly unsuspecting people to a human mental health experiment? Those people were mostly adolescents, according to a cached Google image; the wording on the KokoCares website has since been changed to “young adults.”

Morris surely knows how important it is to take mental health seriously, as he’s detailed his own mental health experiences. Additionally, he recently Tweeted, “I’ve seen suicide notes, videos of people cutting themselves. I’ve seen young girls proudly displaying rail-thin arms and legs, all out in the open, undetected, unanswered.” This suggests he understands there are enormous mental health issues online.

Today, after receiving pushback on his Tweets discussing Koko’s use of AI to counsel “mostly adolescents,” Morris did damage control, saying that “we were not pairing people up to chat with GPT-3, without their knowledge…We offered our peer supporters GPT-3 to help craft their responses…People on the service were not chatting with the AI directly…This feature was opt-in. Everyone knew about the feature when it was live for a few days.”

However, this leaves many questions, including why Morris said this in his initial thread:

The use of artificial intelligence raises a lot of ethical questions in general, but when you’re talking about young people who are in a mental health crisis (which is increasing in the midst of the COVID-19 pandemic), it’s essential for us to pay attention to easily-available, attractive treatment options and any harm that could come from them.

This man and his company have allegedly, by his own admission, been conducting experiments on actual human beings, apparently including adolescents, without getting the experiment approved or even doing the basics of acquiring consent.

Strangely, given his academic and institutional background, Morris has doubled down on what he’s doing as being okay, saying, “This would be exempt [from human research informed consent]. The model was used to suggest responses for help providers, who could opt in to use it or not. We didn’t use any PII, all anonymous data, no plan to publish. But MGH’s IRB is formidable…Couldn’t even use red ink in our study flyers if I recall…”

Many pushed back on this, including “former IRB [Institutional Review Board] member and chair” Daniel Shoskes (who has an impressive CV of published works), who responded to Morris by saying, “you have conducted human subject research on a vulnerable population without IRB approval or exemption (YOU don’t get to decide). Maybe the MGH IRB process is so slow because it deals with stuff like this. Unsolicited advice: lawyer up.”

Rob Morris has not responded to a request for comment as of publishing; this piece will be updated if he does.


I am completely independent and people-funded (and have been published in major publications like Rolling Stone). Help Courage News grow by becoming a sustaining member (less than $5/month!):

~ Jenn Dize